Wan 2.7 Video API: Automating High-Quality Video Assets for Modern Web Design

The adoption of the Wan 2.7 Video API ecosystem marks a shift toward programmatic, high-precision video generation in 2026. For web design teams, this technology moves beyond static layouts to embed logic-driven motion directly into modern enterprise product architectures.

Streamlining Workflows with Wan 2.7 Edit Video API and Instruction-Based Editing

The revision phase is often the most resource-intensive part of a digital project. Traditional editing requires manual effort to adjust elements like lighting or textures, but the Wan 2.7 Edit Video API facilitates a programmatic approach through natural language commands.

Natural Language Style and Scene Adjustments

Designers can use instruction-based editing to modify existing footage, such as adjusting styles, scenes, or colors, without the computational overhead of regenerating entire sequences. Visual assets are “patched” rather than replaced, which allows rapid iteration and significantly reduces rework in the production lifecycle.
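As a rough illustration of what an instruction-based edit request might look like, the sketch below assembles and validates a JSON payload. The field names (`source_video_url`, `instruction`, `resolution`) and the example URL are assumptions made for illustration, not the documented Wan 2.7 Edit Video API schema, so check the provider's reference before relying on them.

```python
import json
from dataclasses import dataclass, asdict

# Hypothetical field names: the real Wan 2.7 Edit Video API schema may differ.
@dataclass(frozen=True)
class EditRequest:
    source_video_url: str      # footage that already exists
    instruction: str           # natural language description of the change
    resolution: str = "720p"   # the article mentions 720p and 1080p tiers


def build_edit_payload(req: EditRequest) -> str:
    """Serialize an edit request to JSON, rejecting obviously invalid input."""
    if not req.instruction.strip():
        raise ValueError("instruction must not be empty")
    if req.resolution not in {"720p", "1080p"}:
        raise ValueError(f"unsupported resolution: {req.resolution}")
    return json.dumps(asdict(req), sort_keys=True)


payload = build_edit_payload(
    EditRequest(
        source_video_url="https://example.com/hero.mp4",  # placeholder URL
        instruction="Shift the color grade to warm sunset tones",
    )
)
print(payload)
```

Validating the instruction before any request is sent keeps malformed revisions out of the render queue, which matters when each request is billed.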

Non-Destructive Version Management via Wan Video API

By treating video segments as versioned assets that can be updated via simple API commands, organizations maintain a lean storage architecture. This streamlines the visual asset lifecycle in agile development environments, allowing teams to respond to feedback without the friction of traditional full-sequence rendering.
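One way to picture a versioned video asset is as a base clip plus an ordered list of edit instructions. The sketch below is a minimal in-memory model under that assumption; the class and method names are hypothetical and nothing here is part of the Wan API itself.

```python
from dataclasses import dataclass, field


@dataclass
class VideoAsset:
    """A base clip plus the ordered edit instructions applied to it."""
    asset_id: str
    base_url: str
    edits: list[str] = field(default_factory=list)

    @property
    def version(self) -> int:
        # Version 0 is the untouched base clip.
        return len(self.edits)

    def apply(self, instruction: str) -> int:
        """Record a new edit and return the resulting version number."""
        self.edits.append(instruction)
        return self.version

    def history_at(self, version: int) -> list[str]:
        """Return the instructions needed to reproduce a given version."""
        if not 0 <= version <= self.version:
            raise ValueError(f"unknown version: {version}")
        return self.edits[:version]


hero = VideoAsset("hero-loop", "https://example.com/hero.mp4")  # placeholder URL
hero.apply("Desaturate the background slightly")
hero.apply("Replace the sky with an overcast look")
print(hero.version, hero.history_at(1))
```

Because earlier versions are described by a prefix of the instruction list, rolling back after feedback means replaying fewer instructions rather than keeping every rendered file.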

Transforming Static Assets via Wan 2.7 Image to Video API

One of the most efficient ways to enhance a website’s visual depth is to animate existing brand assets. Many clients possess high-quality static photography or professional mockups that remain underutilized in traditional layouts. The Wan 2.7 Image to Video API acts as a bridge for transforming these static enterprise libraries into high-fidelity dynamic assets.

Maximizing Portfolio Value with Wan AI API

By integrating this capability into a design workflow, teams can programmatically convert product photos into seamless cinematic loops for hero sections or full-page backgrounds. The API is designed to preserve the original resolution and intricate details of the source image while introducing natural, fluid motion.

Automated Consistency at Scale

This automation allows designers to maximize the value of a client’s existing portfolio, creating a cohesive visual narrative across a website without the overhead of a new video shoot. Ensuring that the transition from image to motion preserves the brand’s original detail is a critical requirement for professional-grade storytelling.
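Consistency at scale largely comes down to applying the same motion prompt and settings to every image in a library. The sketch below builds a job list from file names; the job fields and the `-loop.mp4` naming scheme are illustrative assumptions rather than the Image to Video API's actual request format.

```python
from pathlib import PurePosixPath

IMAGE_EXTENSIONS = {".jpg", ".jpeg", ".png", ".webp"}


def build_jobs(image_paths, motion_prompt, resolution="1080p"):
    """Create one image-to-video job per image, sharing prompt and settings."""
    jobs = []
    for path in sorted(image_paths):
        p = PurePosixPath(path)
        if p.suffix.lower() not in IMAGE_EXTENSIONS:
            continue  # skip anything that is not a supported image
        jobs.append(
            {
                "image": path,
                "prompt": motion_prompt,          # identical for every asset
                "resolution": resolution,
                "output_name": f"{p.stem}-loop.mp4",
            }
        )
    return jobs


jobs = build_jobs(
    ["products/b.png", "products/a.JPG", "products/notes.txt"],
    motion_prompt="Slow parallax push-in with soft natural light",
)
for job in jobs:
    print(job["image"], "->", job["output_name"])
```

Sorting the inputs and deriving output names from the source file keeps reruns deterministic, so a regenerated batch overwrites rather than duplicates earlier loops.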

Maintaining Brand Integrity with Wan 2.7 Reference To Video API

A recurring challenge in automated content production is “identity drift,” where a subject or brand mascot loses consistency across different video clips. The Wan 2.7 Reference To Video API introduces a robust mechanism for maintaining subject integrity through a 3×3 multi-reference grid.

Unified Identity and Multimodal Integration

This technical infrastructure allows the API to ingest structural data from multiple angles simultaneously, locking in the character’s or product’s visual identity. The same multimodal approach extends to audio, so that appearance, motion, and voice stay unified.
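The article does not describe how the 3×3 grid is assembled or submitted. Assuming, purely for illustration, that nine reference views are tiled onto a single square canvas, the layout arithmetic could be sketched as follows; the canvas size, view names, and function names are all hypothetical.

```python
def grid_boxes(canvas_w, canvas_h, rows=3, cols=3):
    """Return (left, top, right, bottom) boxes in row-major order."""
    cell_w, cell_h = canvas_w // cols, canvas_h // rows
    return [
        (c * cell_w, r * cell_h, (c + 1) * cell_w, (r + 1) * cell_h)
        for r in range(rows)
        for c in range(cols)
    ]


def assign_references(names, canvas_w=1536, canvas_h=1536):
    """Map each reference view to its cell; requires exactly nine views."""
    boxes = grid_boxes(canvas_w, canvas_h)
    if len(names) != len(boxes):
        raise ValueError(f"expected {len(boxes)} references, got {len(names)}")
    return dict(zip(names, boxes))


views = [
    "front", "front-left", "left",
    "back-left", "back", "back-right",
    "right", "front-right", "close-up",
]
layout = assign_references(views)
print(layout["front"], layout["close-up"])
```

Rejecting an incomplete set up front avoids submitting a grid with empty cells, which would weaken the identity lock the grid is meant to provide.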

Collaborative Asset Locking via Alibaba Wan 2.7 Video API

For digital agencies, this means that a specific brand persona can be reliably rendered in various environments and scenarios while maintaining the same facial features and motion patterns. By setting up “Golden References” within the API workflow, teams can ensure that every generated asset remains on-brand without the need for manual post-production.

Streamlining Client Feedback with Wan 2.7 Edit Video API

Client revision rounds benefit from the same instruction-based approach. Instead of manually adjusting specific elements like lighting, textures, or background colors in a traditional editor, teams can pass the requested changes to the Wan 2.7 Edit Video API as instructions.

Programmatic Revision Layers

Designers can send natural language commands to the API to apply visual patches or stylistic updates to existing footage. This non-destructive patching reduces the computational overhead on infrastructure and allows for sophisticated version management.

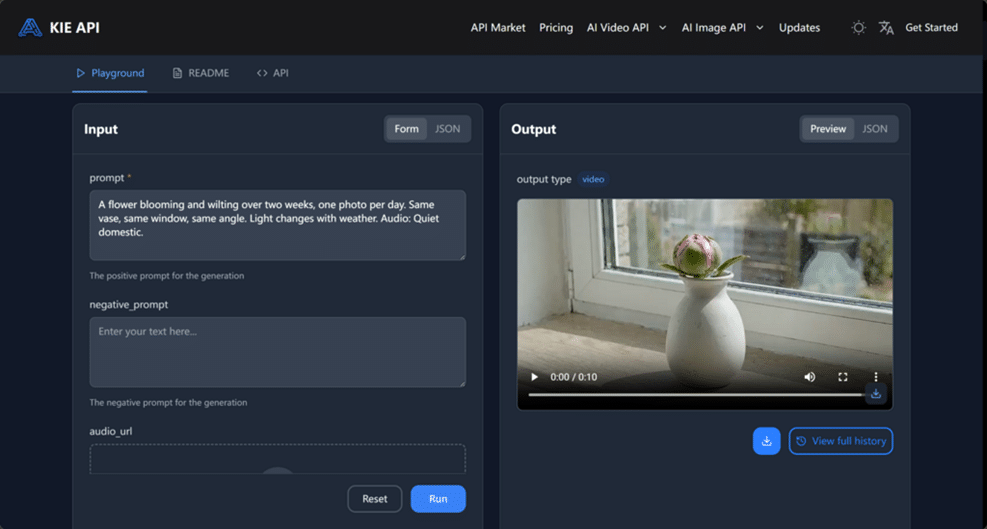

Analyzing the Unit Economics of Scaling Generative Video Infrastructure on Kie.ai

Integrating high-end AI capabilities into an enterprise product requires a clear understanding of inference latency and cost structures. Accessing these tools via Kie.ai ensures a high-concurrency environment designed for professional throughput.

Cost Architecture for High-Volume Production

The pricing model on Kie.ai is designed to support scalable operations. Standard 720p generation is priced at $0.08 per second, while 1080p high-definition generation is approximately $0.12 per second.
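Using the per-second rates quoted above, a project budget can be estimated with simple arithmetic. The sketch below assumes billing is strictly proportional to clip duration, which the article implies but does not confirm; the clip mix is an invented example.

```python
from decimal import Decimal

# Per-second list prices as quoted in the article.
RATE_PER_SECOND = {"720p": Decimal("0.08"), "1080p": Decimal("0.12")}


def estimate_cost(clips):
    """Sum the cost of (resolution, duration_seconds) pairs in dollars."""
    total = Decimal("0")
    for resolution, seconds in clips:
        total += RATE_PER_SECOND[resolution] * Decimal(str(seconds))
    return total


# Example mix: ten 8-second 1080p hero loops and twenty 5-second 720p clips.
clips = [("1080p", 8)] * 10 + [("720p", 5)] * 20
print(f"Estimated cost: ${estimate_cost(clips)}")
```

`Decimal` is used instead of floats so that totals stay exact to the cent when many short clips are summed.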

Optimized ROI through Advanced Top-Ups

To further optimize operational budgets, Kie.ai offers an advanced top-up model with a 10% bonus. This reduces the effective cost to roughly $0.072 per second for 720p and $0.108 per second for 1080p if the bonus works as a 10% discount; if it is instead granted as 10% extra credit, the effective rates are closer to $0.073 and $0.109. Either way, design leads can forecast project expenses accurately and demonstrate a clear ROI.
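The effective rate depends on how the 10% bonus is applied, which the article does not spell out. The sketch below compares two readings, a 10% discount on the list price and 10% extra credit per top-up; both functions are illustrative arithmetic, not Kie.ai's billing logic.

```python
from decimal import Decimal, ROUND_HALF_UP

FOUR_PLACES = Decimal("0.0001")


def rate_with_discount(list_rate, discount):
    """Effective rate if the bonus is a straight price reduction."""
    return (list_rate * (1 - discount)).quantize(FOUR_PLACES, ROUND_HALF_UP)


def rate_with_bonus_credit(list_rate, bonus):
    """Effective rate if each dollar buys (1 + bonus) dollars of credit."""
    return (list_rate / (1 + bonus)).quantize(FOUR_PLACES, ROUND_HALF_UP)


ten_percent = Decimal("0.10")
for label, rate in (("720p", Decimal("0.08")), ("1080p", Decimal("0.12"))):
    print(
        label,
        "discount:", rate_with_discount(rate, ten_percent),
        "bonus credit:", rate_with_bonus_credit(rate, ten_percent),
    )
```

The two readings differ by under a tenth of a cent per second, but the gap compounds over thousands of seconds of generated footage, so it is worth confirming which one applies before forecasting.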

Conclusion: Structuring the Future of Web Motion with Wan AI API

The implementation of the Alibaba Wan 2.7 Video API signifies a transition toward a structured, engineering-led approach to visual content in web design. By resolving the persistent trade-offs between motion fidelity and production velocity, this suite of APIs allows design teams to treat high-precision video as a scalable UI component rather than an expensive luxury. As explored, the shift to logic-driven motion—supported by character consistency and instruction-based editing—provides a measurable framework for agencies to scale output without ballooning overhead.